StoriesLM-v2: A Family of Language Models With Time-Indexed Training Data

May 11, 2026

How does a language model behave when it has only seen the past?

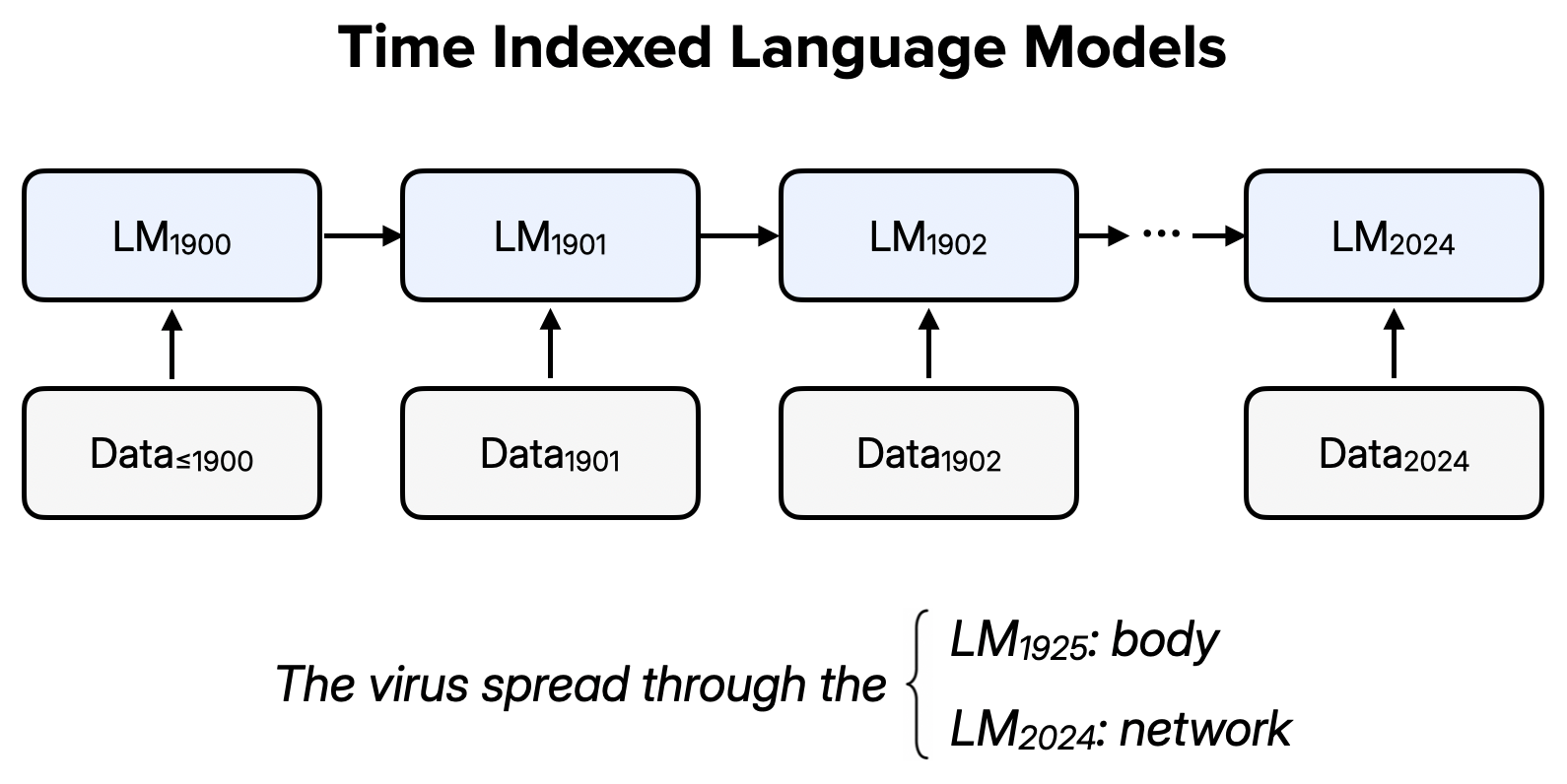

StoriesLM-v2 is a family of 125 encoder-only language models trained on an expanding sequence of historical language data. These are "time-indexed" or "vintage" language models: each model is trained only on language produced up to a specific year.

I originally became interested in vintage language models because of the lookahead bias problem in financial forecasting (Glasserman and Lin, 2023; Sarkar and Vafa, 2024; Levy, 2026). Suppose a model trained on language up to the present performs well in a backtest. Is the model reasoning well, or is it just memorizing the past? StoriesLM-v1 (named after the American Stories dataset) was an early effort to address this issue by building a sequence of 64 language models with rolling knowledge cutoffs.

I recently revisited this topic after reading new research on vintage language models (Evans, 2024; He et al., 2025; Duderstadt and Helm, 2025; Levine, Duvenaud, and Radford, 2026) and thinking more about language embeddings and semantic change (Hamilton, Leskovec, and Jurafsky, 2016; Kutuzov et al., 2018) and about vintage language models for scientific discovery (Hassabis, 2026). StoriesLM-v2 expands StoriesLM-v1 to a family of 125 sequentially trained, encoder-only language models.

Semantic Change

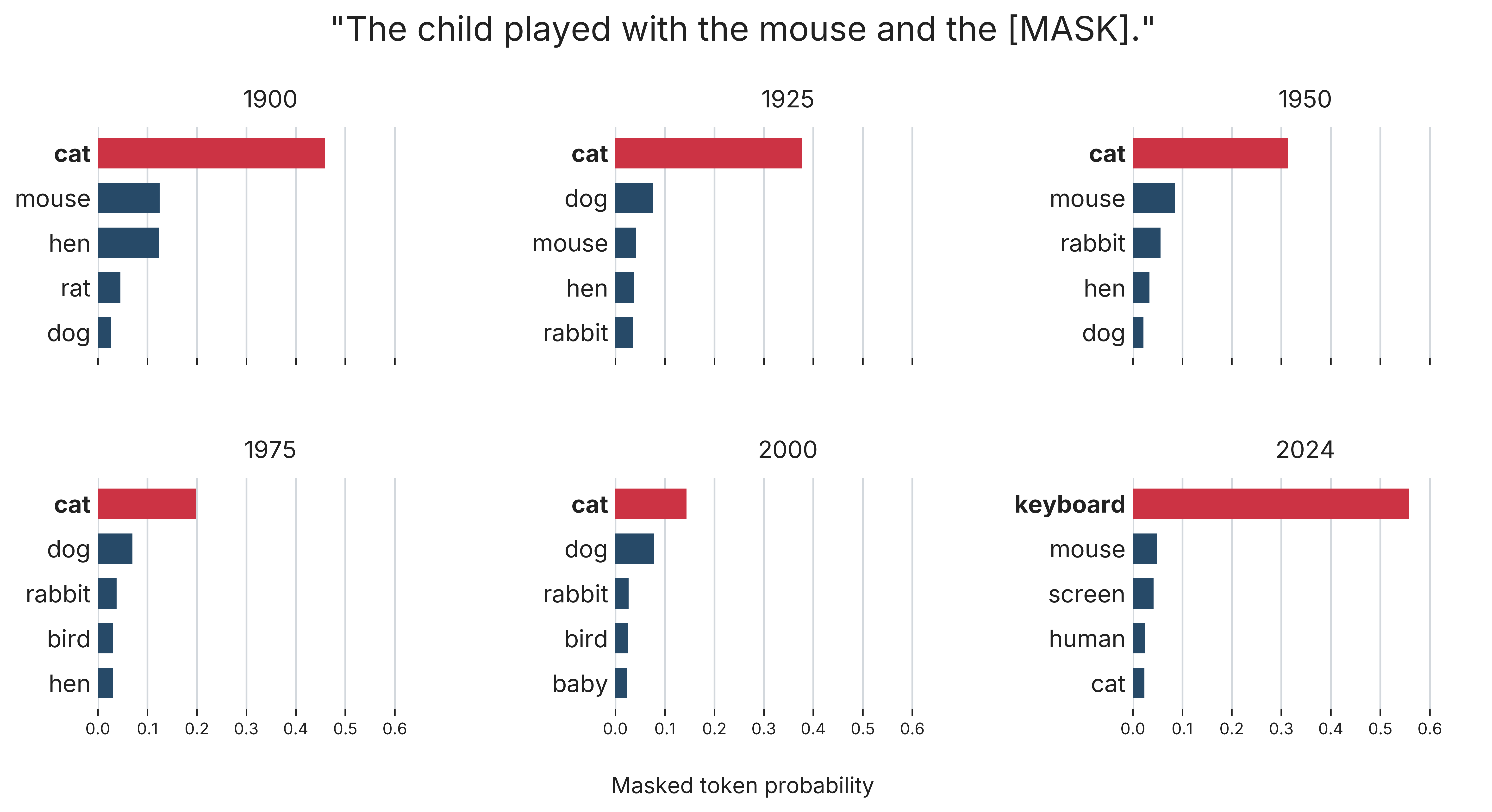

Here are some examples of how masked token probabilities vary across model vintages.

The associations of "mouse" shift from animal in the 1900 vintage to technology in the 2024 vintage. The 2000 vintage shows a weaker shift, likely because training data coverage is thin during that period.

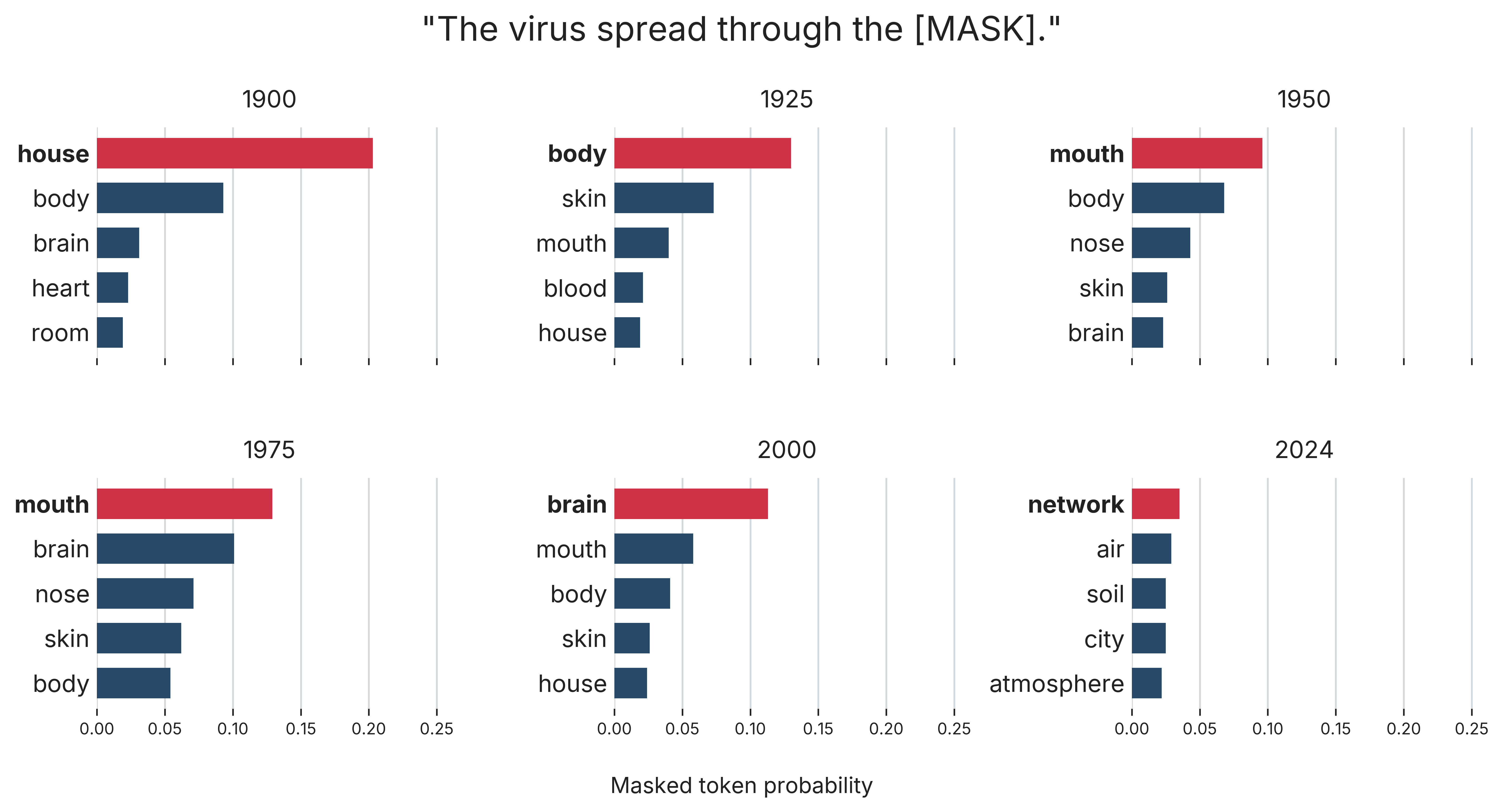

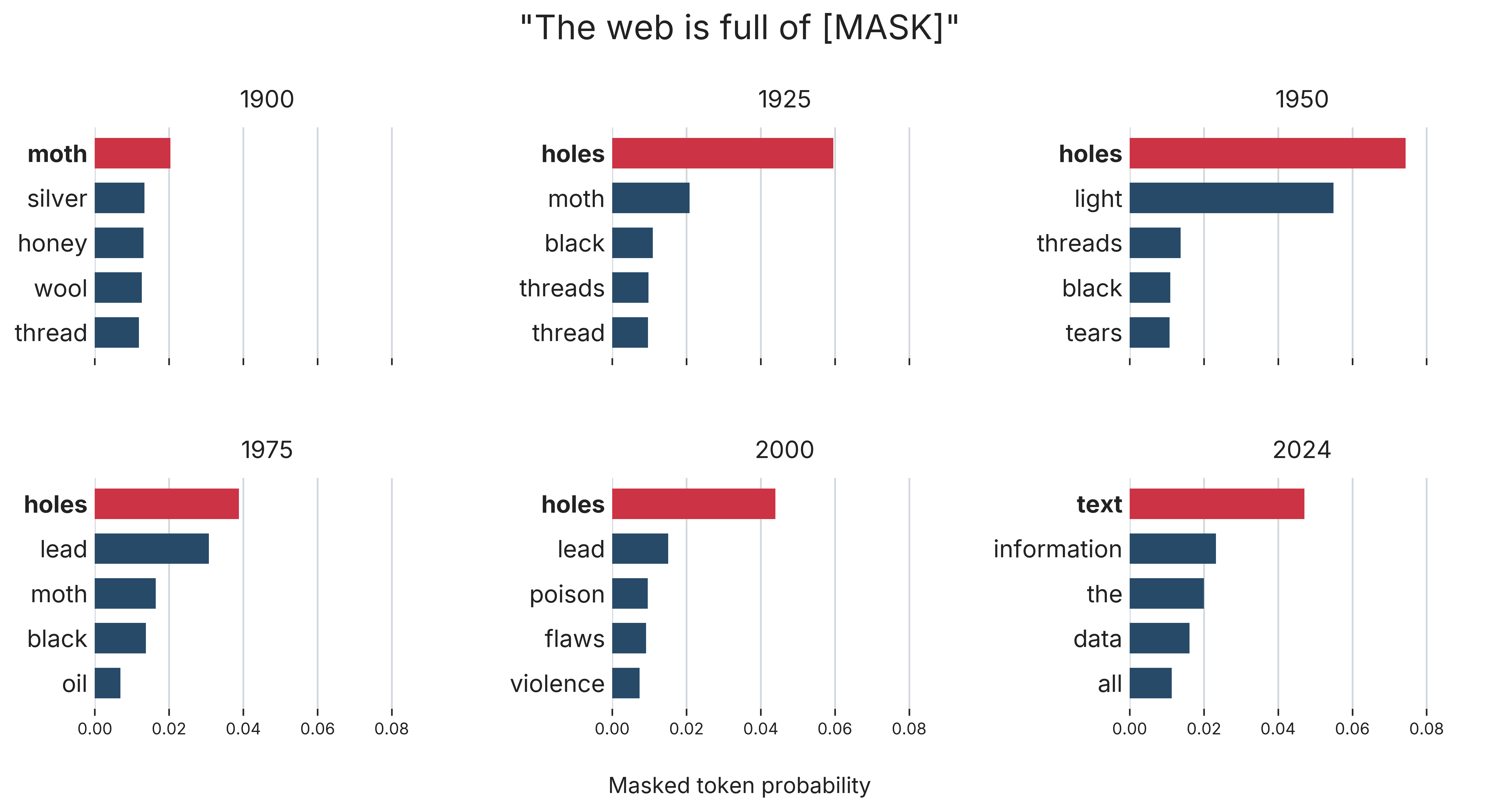

We see similar shifts in these two examples:

- “virus”: body → network

- “web”: moth → text
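
A minimal sketch of how such a comparison can be computed, assuming you already have the logits each vintage assigns at the masked position. The function names, the four-token vocabulary, and the logit values are all hypothetical placeholders for illustration, not outputs of the actual models.

```python
import math


def candidate_probabilities(mask_logits, vocab, candidates):
    """Softmax over the whole vocabulary, then read off selected tokens.

    mask_logits: one logit per vocabulary entry at the masked position.
    vocab: dict mapping token string -> index into mask_logits.
    candidates: token strings whose probabilities we want to report.
    """
    peak = max(mask_logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in mask_logits]
    total = sum(exps)
    return {tok: exps[vocab[tok]] / total for tok in candidates}


def compare_vintages(logits_by_year, vocab, candidates):
    """Return {year: {candidate: probability}} for each model vintage."""
    return {
        year: candidate_probabilities(logits, vocab, candidates)
        for year, logits in sorted(logits_by_year.items())
    }


# Toy vocabulary and made-up logits, for illustration only.
toy_vocab = {"cat": 0, "trap": 1, "keyboard": 2, "click": 3}
toy_logits = {
    1900: [2.0, 1.5, -3.0, -3.0],
    2024: [0.5, 0.0, 2.0, 1.5],
}
shifts = compare_vintages(toy_logits, toy_vocab, ["cat", "keyboard"])
```

In practice the logits would come from running each checkpoint on the same masked sentence; keeping the sentence and candidate list fixed is what makes the probabilities comparable across vintages.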

Data and Training

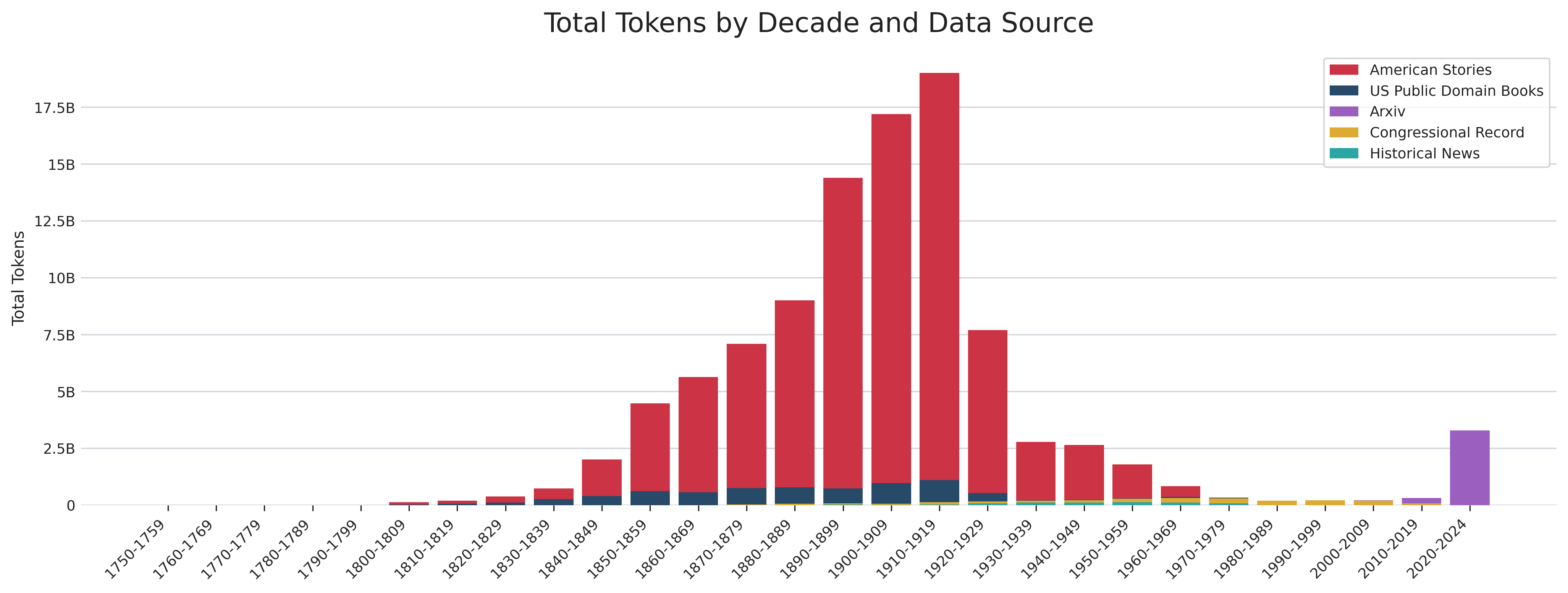

The models are trained on five data sources that together span 1750 to 2024:

- American Stories: Articles from historical US newspapers in the Library of Congress's Chronicling America collection (Dell et al., 2023).

- The US public domain books portion of Common Corpus (Langlais et al., 2025).

- A subset of the arXiv papers portion of the Common Pile (Kandpal et al., 2025).

- Congressional Record: Speech text (distributed by Gentzkow, Shapiro, and Taddy, 2018).

- Newswire: US wire service articles from 1878–1977 (Silcock et al., 2024).

These documents are further filtered by length (200–500,000 characters), language (using an English function word heuristic), quality (penalizing many digits, special characters, uppercase characters, and extreme word lengths), repetition (duplicate lines and n-grams), and likely OCR errors (including long consonant or vowel runs, and letter-digit strings). After processing, the corpus contains 281.9M documents and 358.5B characters. With the ModernBERT-base tokenizer, this corresponds to 100.8B training tokens.
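
The filters above can be sketched roughly as follows. Only the 200–500,000 character bounds come from the description; the function-word list, every ratio threshold, the consonant-run length, and the name `keep_document` are hypothetical choices made for illustration, and the n-gram repetition and letter-digit checks are omitted for brevity.

```python
import re

# Illustrative English function words (an assumption, not the actual list).
FUNCTION_WORDS = {
    "the", "of", "and", "to", "a", "in", "that", "is",
    "was", "for", "it", "with", "as", "on", "by",
}

MIN_CHARS, MAX_CHARS = 200, 500_000  # bounds stated in the text
# Every threshold below is a hypothetical placeholder.
MIN_FUNCTION_WORD_RATIO = 0.15
MAX_DIGIT_RATIO = 0.20
MAX_UPPER_RATIO = 0.30
MAX_DUPLICATE_LINE_RATIO = 0.30
LONG_CONSONANT_RUN = re.compile(r"[bcdfghjklmnpqrstvwxz]{7,}", re.IGNORECASE)


def keep_document(text):
    """Return True if the document passes every heuristic filter."""
    n = len(text)
    if not (MIN_CHARS <= n <= MAX_CHARS):  # length filter
        return False
    words = re.findall(r"[A-Za-z']+", text.lower())
    if not words:
        return False
    # Language filter: English text is dense in function words.
    function_ratio = sum(w in FUNCTION_WORDS for w in words) / len(words)
    if function_ratio < MIN_FUNCTION_WORD_RATIO:
        return False
    # Quality filters: too many digits or uppercase characters.
    if sum(c.isdigit() for c in text) / n > MAX_DIGIT_RATIO:
        return False
    if sum(c.isupper() for c in text) / n > MAX_UPPER_RATIO:
        return False
    # Repetition filter: share of non-empty lines that are duplicates.
    lines = [ln.strip() for ln in text.splitlines() if ln.strip()]
    if lines and 1 - len(set(lines)) / len(lines) > MAX_DUPLICATE_LINE_RATIO:
        return False
    # OCR filter: implausibly long consonant runs.
    if LONG_CONSONANT_RUN.search(text):
        return False
    return True
```

Each check returns early, so cheap filters such as length run before the ones that scan the whole text.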

Here is the distribution of tokens across time and data sources:

Data coverage is thin between 1960 and 2020, and I would like to add more training data from this period in the next version of this model family.

Training starts with a ModernBERT-base architecture initialized from scratch, with 512-token packed sequences and an MLM objective with masking probability 0.15. The initial model is trained on all documents dated 1750–1900 (45.8B tokens) for one epoch. Subsequent models are trained by continued pretraining for one epoch on the next year's documents, with no replay.
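
The schedule described above can be written down as a short sketch. It assumes the final vintage is 2024 (the end of the stated data range); the `Stage` class and `build_schedule` function are hypothetical names. Under that assumption, one base model plus one checkpoint per year from 1901 to 2024 gives 125 models, which matches the size of the family.

```python
from dataclasses import dataclass
from typing import Optional

FIRST_YEAR = 1750
BASE_CUTOFF = 1900
LAST_YEAR = 2024  # assumed from the stated data range


@dataclass(frozen=True)
class Stage:
    cutoff: int                # knowledge cutoff of the resulting checkpoint
    data_years: range          # document years consumed in this stage
    init_from: Optional[int]   # parent checkpoint's cutoff; None = from scratch


def build_schedule():
    """Base model on 1750-1900, then one continued-pretraining stage per year."""
    stages = [Stage(BASE_CUTOFF, range(FIRST_YEAR, BASE_CUTOFF + 1), None)]
    for year in range(BASE_CUTOFF + 1, LAST_YEAR + 1):
        # No replay: each stage sees only that year's documents.
        stages.append(Stage(year, range(year, year + 1), year - 1))
    return stages


schedule = build_schedule()
print(len(schedule))  # 125
```

Because each stage starts from the previous year's checkpoint, the chain is strictly sequential: the 2024 model cannot be trained until all 124 earlier stages have finished.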

Embedding Model

RepresentLM-v2 is an embedding model built on top of StoriesLM-v2. It uses the HEADLINES dataset (Silcock, Arora, and Dell, 2023), which contains multiple headlines from historical newspapers matched to the same underlying article. This creates a contrastive training objective where two headlines pointing to the same article constitute positive examples. I map the 1979 StoriesLM-v2 checkpoint to a SentenceTransformers model with mean pooling and embedding normalization. I apply the same quality and OCR filters used for the MLM models to headlines dated 1920–1979 and sample headline pairs from articles with at least five matched headlines.
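
Two pieces of this setup can be sketched in plain Python: sampling positive headline pairs, and mean pooling followed by normalization. The function names, the `pairs_per_article` parameter, and the choice to deduplicate headlines before counting are assumptions for illustration; the contrastive loss itself is not shown because the description above does not specify it.

```python
import itertools
import math
import random

MIN_HEADLINES = 5  # from the text: at least five matched headlines


def sample_positive_pairs(headlines_by_article, pairs_per_article, seed=0):
    """Sample (anchor, positive) headline pairs that share an article."""
    rng = random.Random(seed)
    pairs = []
    for headlines in headlines_by_article.values():
        unique = sorted(set(headlines))  # assumption: drop exact duplicates
        if len(unique) < MIN_HEADLINES:
            continue
        combos = list(itertools.combinations(unique, 2))
        pairs.extend(rng.sample(combos, min(pairs_per_article, len(combos))))
    return pairs


def mean_pool_and_normalize(token_vectors, attention_mask):
    """Average the non-padding token vectors, then scale to unit L2 norm."""
    kept = [vec for vec, keep in zip(token_vectors, attention_mask) if keep]
    dim = len(kept[0])
    mean = [sum(vec[i] for vec in kept) / len(kept) for i in range(dim)]
    norm = math.sqrt(sum(x * x for x in mean)) or 1.0  # guard against zero
    return [x / norm for x in mean]


# Toy inputs, for illustration only.
toy_articles = {
    "article-1": ["h1", "h2", "h3", "h4", "h5"],
    "article-2": ["x", "y"],  # too few headlines, skipped
}
toy_pairs = sample_positive_pairs(toy_articles, pairs_per_article=3)
toy_embedding = mean_pool_and_normalize(
    [[3.0, 0.0], [0.0, 4.0], [100.0, 100.0]],  # last row is padding
    [1, 1, 0],
)
```

With unit-norm embeddings, the dot product of two headline vectors equals their cosine similarity, which is the usual quantity a contrastive objective pushes up for positive pairs.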

Next Steps

Training: I plan to train a family of generative models on this data. In addition, I would like to run ablations on several of the design choices (e.g., replay) and scale up the models.

Data: Coverage is sparse between the end of the historical news dataset and the beginning of the academic papers dataset, and I plan to expand the data sources used for training.

Experiments: There are open research questions about the behavior of continued temporal pretraining and about the uses of vintage language models for social science and scientific discovery.

Comments are very welcome! Get in touch.

Link to models: StoriesLM-v2, RepresentLM-v2